Resources

Webinars

4x Faster Spark on Your Own Infrastructure: Bringing Quanton to Self-Managed Kubernetes

Drop-in Spark acceleration for self-managed Kubernetes clusters – no code changes, no managed service, no compromises.

Lakehouse, Finally at Database Speeds: Introducing Onehouse LakeBase™ for the AI Era

A Postgres-compatible serving endpoint for Apache Iceberg™ and Apache Hudi™, with intelligent indexing and caching for millisecond lookups and fast analytics at high concurrency.

The Apache Spark™ Job That Wouldn’t Retry

Stop 2 A.M. firefighting caused by brittle retries and surprise downstream triggers, and adopt orchestration techniques that make Spark failures observable, recoverable, and safe to re-execute.

How to Cut AWS EMR Compute Costs by 70%+ While Accelerating Data Pipelines

A practical framework for identifying Apache Spark™ inefficiencies, optimizing autoscaling, and achieving 3-4x better price-performance across your EMR workloads.

The True Cost of Apache Spark™: How Enterprises Slash Spend by 50% or More

Stop overpaying for Spark; learn how the biggest cost leaks happen and how to fix them.

Uncovering Hidden Bottlenecks: How to Cut Apache Spark™ Compute Costs by 50%+

A step-by-step look at where Spark wastes compute and what engineers can do to reclaim performance and budget.

Cost Analyzer for Apache Spark™ Live Demo: Find Hidden Bottlenecks In Minutes, Unlock 2-3x Performance

See how to pip install the free Cost Analyzer in minutes and find 50%+ savings opportunities in your Spark jobs.

Cut Your Apache Hudi™/Apache Spark™ Costs: From DIY Fixes to Engine-Level Optimizations

Practical ways to optimize your tables and unlock 30–70% savings in Spark/EMR compute spend without sacrificing performance.

dbt + Onehouse = 50% cheaper Spark SQL Pipelines

Ingest Once. Transform Once. Query Anywhere with Snowflake, Databricks, and all other Data Platforms

Introducing Quanton Engine from Onehouse

Most SQL engines are generic and stateless - they have a singular focus on optimizing compute for query execution through standard techniques. Join this webinar to learn how Quanton intelligently exploits a key observation in ETL workload patterns to minimize the work performed in each ETL run rather than naively attempting to run as fast as possible.

Your Hudi. Amplified.

Congrats on choosing Apache Hudi™ as the backbone of your data lakehouse! Join this webinar to learn about the new features in recent Hudi releases, how others are using them, how you can upgrade, and how Onehouse can help.

Introducing Open Engines™: Your Data. Your Compute Engine. Your Choice.

The Universal Data Lakehouse delivers interoperability and openness, from ingestion to table formats to compute engines. Now, with Open Engines, Onehouse makes it simple to apply open source engines for stream processing, BI reporting and analytics, and data science, AI, and ML to your lakehouse tables.

Table Format != Data Lakehouse: Breaking Down the Components of the Lakehouse

Open table formats - Apache Hudi™, Apache Iceberg, and Delta Lake - are all the rage. But lately they have become synonymous with data lakehouses. Turns out there is a lot more to a data lakehouse than only a table format. Join this webinar, where we dissect the key components of the data lakehouse architecture and where table formats fit into it.

Optimizing Apache Hudi & Spark Pipelines to Achieve 10X Performance at 1/2 the Cost

Spark is the processing engine of choice for running Hudi data lakehouse pipelines. Join this webinar with Kyle Weller and Rajesh Mahindra of Onehouse to learn how customers are accelerating Hudi and Spark for 10x performance or more at half the cost of other solutions.

Introducing Onehouse Compute Runtime: Accelerated Spark Runtime for Apache Hudi™, Iceberg and Delta Lake

Join Vinoth Chandar and Kyle Weller to learn how Onehouse Compute Runtime automates optimizations for 2-30x faster queries and 80% infrastructure cost reductions.

Bridging the Gap: A Database Experience on the Data Lake

Join this fireside chat and Q&A with Vinoth Chandar and Ananth Packkildurai of Data Engineering Weekly to learn about Apache Hudi™ 1.0, the origin of Hudi, and the future of data lakehouses and data catalogs.

Automated Performance Tuning for Apache Hudi™ on Amazon EMR

Learn from Hudi experts how you can optimize and accelerate your data lakehouse on Amazon EMR.

Implementing the fastest, most open data lakehouse for Snowflake ETL/ELT

Learn how you can leverage the most open data lakehouse to ingest, store, and transform data for Snowflake at a fraction of the cost, while enabling end-users to access data in Apache Iceberg, Apache Hudi, and Delta Lake formats.

Deliver an Open Data Architecture with a Fully Managed Data Lakehouse

Join this webinar to learn how Conductor and other organizations have rearchitected their data stacks with an open data platform to ensure that they can always work with any upstream data source and downstream query engine, future-proofing their stack to support the current wave of GenAI use cases - and whatever comes next.

Vector Embeddings in the Lakehouse: Bridging AI and Data Lake Technologies

Join NielsenIQ and Onehouse to explore the crucial role of vector embeddings in AI, and discover how Onehouse makes it easier than ever to generate and manage vector embeddings directly from your data lake.

Introducing Onehouse LakeView and Table Optimizer - Power Tools for the Data Lakehouse

Learn about LakeView, a free data lakehouse observability and management tool, and Table Optimizer, a managed service to optimize your data lakehouse tables in production.

Iceberg for Snowflake: Implementing the fastest, most open data lakehouse for Snowflake ETL/ELT

Learn how to ingest, store and transform your Snowflake data faster and for a fraction of the cost using fully-managed Iceberg tables.

NOW Insurance Uses Data and AI to Revolutionize an Industry

With Onehouse, NOW Insurance is Harnessing Data, Cutting Costs, and Driving Innovation

Universal Data Lakehouse: User Journeys from the World's Largest Data Lakehouse Users

Streaming Ingestion at Scale: Kafka to the lakehouse

Scale data ingestion like the world’s most sophisticated data teams, without the engineering burden.

Implementing End-to-End CDC to the Universal Data Lakehouse

Learn how to replicate operational databases to the data lakehouse in a manner that is easy, fast, and cost-efficient, and that opens your data to multiple downstream engines.

The Onehouse Universal Data Lakehouse Demo and Q&A

Learn all about the benefits of the universal data lakehouse architecture and see Onehouse in action for use cases such as Postgres change data capture!

OneTable Introduction and Live Demo

Tired of making tradeoffs between data lake formats? Learn how OneTable opens your data to any - or all - formats including Apache Hudi™, Delta Lake and Apache Iceberg, and see a live demo!

Hello World! The Onehouse Universal Data Lakehouse™ Demo and Q&A

Join Onehouse Founder and CEO Vinoth Chandar for an overview and demo of the Onehouse platform.

Hudi 0.14.0 Deep Dive: Record Level Index

Nadine Farah, an Apache Hudi™ contributor, and Prashant Wason, the release manager for Hudi 0.14.0, take a deep dive into the new record-level index feature.

Deep Dive: Hudi, Iceberg, and Delta Lake

Join Onehouse Head of Product Management Kyle Weller as he discusses the ins and outs of the most popular open source lakehouse projects.

Open Source Data Summit

Join thought leaders from Onehouse, AWS, Confluent, Uber, Walmart, Tesla, Netflix and more as they discuss how open source projects have become the standard for data architectures at companies of all sizes.

White Papers

2025 Market Study: Modern Data Architecture in the AI Era

The AI data architecture revolution is happening now—and your competition is already moving. A groundbreaking 2025 study reveals 85.3% of enterprises have secured GenAI budgets, with 82.6% racing to deploy by year-end.

Apache Hudi: The Definitive Guide

Whether you've been using Hudi for years or you’re new to Hudi’s capabilities, the early-release chapters of this O'Reilly guide will help you build robust, open, and high-performing data lakehouses.

Apache Hudi: From Zero to One

Apache Hudi™ helped power Uber to global leadership. Organizations large and small, from Amazon to Walmart, have joined in, helping to create one of the liveliest and most effective open source projects. Learn about Hudi's storage format, its versatile read and write capabilities, its robust table services, and Hudi Streamer.

NOW Insurance’s Data Journey with Onehouse: Streamlining for Growth

NOW Insurance is a rapidly growing pioneer in the insurtech space. Read about why they chose the Universal Data Lakehouse architecture - and partnered with Onehouse to make it happen, fast.

The Journey to the Universal Data Lakehouse

Apna, Notion, Uber, Walmart, Zoom. What do these companies have in common? Aside from their businesses generating massive volumes of data - at high velocity - all of their teams have chosen the universal data lakehouse as a core component of their data stack and pipelines.

Building a Universal Data Lakehouse

You shouldn’t have to move copies of data around, never knowing which is the real source of truth for different applications such as reporting, AI/ML, data science, and analytics. Learn how the universal data lakehouse architecture is reshaping how businesses like Uber, Walmart, and TikTok handle vast and diverse data with unparalleled efficiency.

Hudi vs. Delta Lake vs. Iceberg Comparison

The data lakehouse is gaining strong interest from organizations looking to build a centralized data platform. Many are struggling to choose between the three popular lakehouse projects: Hudi™, the original data lakehouse, developed at Uber; Iceberg, developed at Netflix; and Delta Lake, an open source version of the Databricks lakehouse. Learn about the goals and differences of each project.

Data Sheets

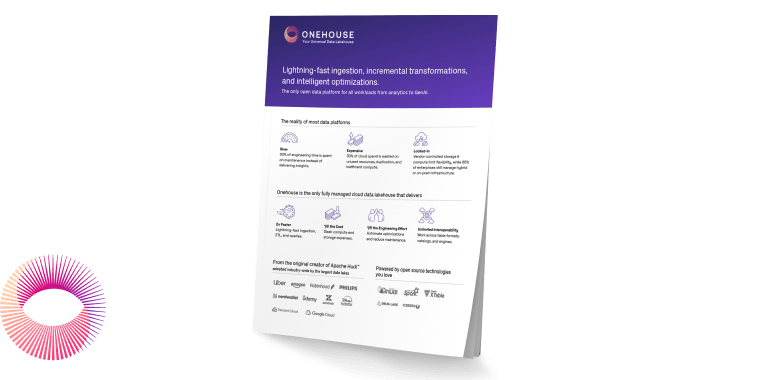

Onehouse for Spark

Discover how Onehouse delivers 2-3x better price/performance for Apache Spark™ ETL pipelines versus competing solutions.

Onehouse Iceberg for Snowflake

Learn how Onehouse delivers Apache Iceberg™ tables to Snowflake for a truly open data architecture at 60% lower cost for teams who wish to keep control of their data and avoid proprietary formats.

Onehouse Data Integration Guide

Explore Onehouse’s comprehensive guide to the data integration and connector options available for your needs.

Introducing the Onehouse Universal Data Lakehouse

Combine the scalability and flexibility of data lakes with the stability and accessibility of data warehouses, and open it to your entire ecosystem.

Onehouse + Confluent = Limitless Real-Time Workloads

Build real-time workloads in minutes to power use cases across your entire ecosystem, including change data capture, analytics, AI and ML, and more.

Events

Demos

Video Overview: Introducing the Onehouse Universal Data Lakehouse

Onehouse builds on the data lakehouse architecture with a universal approach that makes data from all your favorite sources - streams, databases, and cloud storage, for example - available to all the common query engines, languages, and data lakehouse formats your data consumers use every day.

Ingest PostgreSQL CDC data into the lakehouse with Onehouse and Confluent

See how you can replicate Postgres tables into the lakehouse using Onehouse's new Confluent CDC source. This demo showcases the fully automated integration between Onehouse and Confluent, which provisions and manages resources in Confluent to facilitate CDC data ingestion into the lakehouse.

Solution Guides

Onehouse Clustering Best Practices

This guide explains clustering strategies such as linear ordering, Z-Ordering, and Hilbert curves for Onehouse and Apache Hudi™. It covers optimal use cases for each clustering strategy, deployment options, and best practices.

Onehouse Catalog Sync for Databricks Unity Catalog

This setup guide walks you through connecting Onehouse’s metadata catalog with Databricks Unity Catalog, ensuring a unified and secure data governance experience across AWS and Databricks. It covers everything from creating a Databricks workspace and Unity Catalog to configuring IAM roles, S3 buckets, and external locations for seamless data storage and syncing.

Bring Your Own Kafka for SQL Server CDC with Onehouse

This guide describes how to implement fully-managed change data capture (CDC) from a SQL Server database to a data lakehouse, using Confluent Cloud’s managed Kafka Connect, Confluent Schema Registry, and Onehouse.

Onehouse Managed Lakehouse Table Optimizer Quick Start

Read this guide to learn how to leverage integrated table services from Onehouse for optimizing read and write performance of Apache Hudi™ tables. Compaction, clustering, and cleaning are supported out-of-the-box features for Hudi tables optimized by Onehouse.

Synchronize PostgreSQL and your Lakehouse: CDC with Onehouse on AWS

In this guide, we will show you how to set up the Change Data Capture (CDC) feature of Onehouse to enable continuous synchronization of data from an OLTP database into a data lakehouse. We will be using Amazon RDS PostgreSQL as our example source database.

Integrate Snowflake with Onehouse

This guide helps users integrate the Snowflake Data Cloud with a fully managed data lakehouse from Onehouse.

Integrate Amazon Athena with your Onehouse Lakehouse

This guide shows how to seamlessly integrate Amazon Athena with your Onehouse Managed Lakehouse. This will allow you to power serverless analytics at scale on top of the data in your Lakehouse.

Ingest Data from your DynamoDB Tables into the Lakehouse using Kafka

This guide will show how to ingest data from DynamoDB Tables into your Onehouse Managed Lakehouse using Onehouse's deep integration with Kafka.

Cross-region Hudi Disaster Recovery using Savepoints

This guide provides a pattern for creating a cross-region disaster recovery solution for Hudi™ using savepoints, enabling highly resilient lakehouses.

AWS Lake Formation and Onehouse Integration Guide

Gain maximum value from your data lakehouse while ensuring robust security and tailored access control.

Build a SageMaker ML Model on a Onehouse Data Lakehouse

Integrate the Onehouse Universal Data Lakehouse with Amazon SageMaker to build machine learning models in near real-time.

Ingest PostgreSQL CDC Data into the Data Lakehouse using Onehouse

Replicate your operational PostgreSQL database to the Onehouse Universal Data Lakehouse with up-to-the-minute data.

Database Replication into the Lakehouse with Onehouse's Confluent CDC Source

Integrate Confluent and Onehouse to seamlessly replicate operational databases in near real-time.

Workshop

Building an Open Lakehouse with Apache Hudi™ & Presto

This hands-on workshop introduces participants to building an open lakehouse architecture using Apache Hudi with Presto as the query engine. Attendees will learn to create Hudi tables, ingest data, run snapshot and read-optimized queries, and sync tables with a catalog (Hive Metastore).

Building an Open Data Lakehouse on AWS S3 with Apache Hudi & Presto

The workshop uses a 10 GB TPC-DS dataset to demonstrate the read and write capabilities of Hudi™ and Presto. The dataset will be made available at a common S3 location accessible to workshop attendees.

Case Studies

Conductor Transforms Data Architecture with Onehouse's Universal Data Lakehouse

Learn how Conductor eliminated complex Apache Spark management, reduced query times by more than 75%, and freed their engineering team to focus on innovation by transforming their data infrastructure with Onehouse.

Olameter Harnesses the Power of a Fully Managed Universal Data Lakehouse

Olameter turned to Onehouse to bring years of historical XML data into a fully managed, open data lakehouse. They cut data ingestion and processing times by 10x, powering ML models and adding value to their data pipelines.

NOW Insurance’s Data Journey With Onehouse: Streamlining For Growth

NOW Insurance is a rapidly growing pioneer in the insurtech space. Read about why they chose the Universal Data Lakehouse architecture - and partnered with Onehouse to make it happen, fast.

Overhauling Data Management at Apna

Apna is the largest and fastest-growing site for professional opportunities in India. Read on to learn how they rearchitected their data infrastructure to move from daily batch workloads to near real-time insights about their business while reducing costs.

The Journey To The Universal Data Lakehouse

Apna, Notion, Uber, Walmart, Zoom. What do these companies have in common? Aside from their businesses generating massive volumes of data - at high velocity - all of their teams have chosen the universal data lakehouse as a core component of their data stack and pipelines.

OpenXData, May 21, 2025

Adopting a 'horses for courses' approach to building your data platform

Today's data platforms too often start with an engine-first mindset, picking a compute engine and force-fitting data strategies around it. This approach seems like the right short-term decision, but given the gravity data possesses, it ends up locking organizations into rigid architectures, inflating costs, and ultimately slowing innovation.

Unity Catalog and Open Table Format Unification

Open table formats, including Delta Lake, Apache Hudi™, and Apache Iceberg™, have become the leading industry standards for lakehouse storage.

Not Just Lettuce: How Apache Iceberg™ and dbt Are Reshaping the Data Aisle

The recent explosion of open table formats like Iceberg, Delta Lake, and Hudi has unlocked new levels of interoperability, allowing data to be stored and accessed across a growing range of engines and environments. However, this flexibility also introduces complexity, making it more challenging to maintain consistency, quality, and governance across teams and platforms.

From Kafka to Open Tables: Simplify Data Streaming Integrations with Confluent Tableflow

Modern data platforms demand real-time data—but integrating streaming pipelines with open table formats like Apache Iceberg™, Delta Lake, and Apache Hudi™, has traditionally been complex, expensive, and risky.

Building a Data Lake for the Enterprise

In this talk, I will go over some of the implementation details of how we built a Data Lake for Clari by using a federated query engine built on Trino & Airflow while using Iceberg as the data storage format.

An Intro to Trajectory Data for AI Agents

AI agents need more than just language - they need to act. This talk introduces trajectory data, an emerging class of data used to train LLM agents.

OneLake: The OneDrive for data

OneLake eliminates pervasive and chaotic data silos created by developers configuring their own isolated storage. OneLake provides a single, unified storage system for all developers.

To Build or Buy: Key Considerations for a Production-grade Data Lakehouse Platform

The data lakehouse architecture has made big waves in recent years. But there are so many considerations. Which table formats should you start with? What file formats are the most performant? With which data catalogs and query engines do you need to integrate? To be honest, it can become a bit overwhelming. But what data engineer doesn't like a good technical challenge? This is where it sometimes becomes a philosophical decision of build vs buy.

Powering Amazon Unit Economics at Scale Using Apache Hudi™

Understanding and improving unit-level profitability at Amazon's scale is a massive challenge, one that requires flexibility, precision, and operational efficiency.

Scaling Multi-modal Data using Ray Data

In the coming years, the use of unstructured and multi-modal data for AI workloads is expected to grow rapidly. This talk will focus on how Ray Data effectively scales data processing for these modalities across heterogeneous architectures and why it is positioned to become a key component of future AI platforms.

Apache Gluten: Revolutionizing Big Data Processing Efficiency

Apache Gluten™ (incubating) is an emerging open-source project in the Apache software ecosystem. It's designed to enhance the performance and scalability of data processing frameworks such as Apache Spark™.

System level security for enterprise AI pipelines

As the adoption of LLMs continues to expand, awareness of the risks associated with them is also increasing. It is essential to manage these risks effectively amidst the ongoing hype, technological optimism, and fear-driven narratives.

AI Agents for ETL/ELT Code Generation: Multiply Productivity with Generative AI

This talk delves into the revolutionary potential of AI agents, powered by generative AI and large language models (LLMs), in transforming ETL (Extract, Transform, Load) and ELT (Extract, Load, Transform) processes.

The Trifecta of Tech: Why Software, Data, and AI Must Work Together to Create Real Value

In today’s rush to adopt AI, many organizations overlook a critical truth: value doesn’t come from AI alone—it comes from the powerful combination of software engineering, data engineering, and AI/ML engineering. In this fast-paced, 15-minute talk, Nisha Paliwal draws on 25+ years of experience in banking and tech to unpack the "Trifecta" that fuels real transformation.

Data as Software and the programmable lakehouse

This talk introduces "Data as Software," a practical approach to data engineering and AI that uses the lakehouse architecture to simplify data platforms for developers. By using serverless functions as a runtime and Git-based workflows in the data catalog, we can build systems that make it far simpler for data developers to apply familiar software engineering concepts to data, such as modular, reusable code, automated testing (TDD), continuous integration and delivery (CI/CD), and version control.

A Flexible, Efficient Lakehouse Architecture for Streaming Ingestion

Zoom went from a meeting platform to a household name during the COVID-19 pandemic. That kind of attention and usage required significant storage and processing to keep up. In fact, Zoom had to scale their data lakehouse to handle 100 TB/day while meeting GDPR requirements.

Bringing the Power of Google’s Infrastructure to your Apache Iceberg™ Lakehouse with BigQuery

Apache Iceberg™ has become a popular table format for building data lakehouses, enabling multi-engine interoperability. This presentation explains how Google BigQuery leverages Google's planet-scale infrastructure to enhance Iceberg, delivering unparalleled performance, scalability, and resilience.

Open Source Query Performance - Inside the next-gen Presto C++ engine

Presto (https://prestodb.io/) is a popular open source SQL query engine for high-performance analytics in the open data lakehouse. Originally developed at Meta, Presto has been adopted by some of the largest data-driven companies in the world, including Uber, ByteDance, Alibaba and Bolt. Today it’s available to run on your own or through managed services such as IBM watsonx.data and Amazon Athena.

Moving fast and not causing chaos

Data engineering teams often struggle to balance speed with stability, creating friction between innovation and reliability. This talk explores how to strategically adapt software engineering best practices specifically for data environments, addressing unique challenges like unpredictable data quality and complex dependencies.

How Our Team Used Open Table Formats to Boost Querying and Reduce Latency for Faster Data Access

In this talk, Amaresh Bingumalla shares how his team used Apache Hudi to enhance data querying on blob storage such as S3. He describes how Hudi helped them cut ETL time and costs, enabling efficient querying without taxing production RDS instances.

ODBC Takes an Arrow to the Knee

For decades, ODBC/JDBC have been the standard for row-oriented database access. However, modern OLAP systems tend instead to be column-oriented for performance - leading to significant conversion costs when requesting data from database systems. This is where Arrow Database Connectivity comes in!

Panel: The Rise of Open Data Platforms

The rise of open data platforms is reshaping the future of data architectures. In this panel, we will explore the evolution of modern data ecosystems, with a focus on lakehouses, open query engines, and open table formats.

Cross paradigm compute engine for AI/ML data

AI/ML systems require realtime information derived from many data sources. This context is needed to create prompts and features. Most successful AI models require rich context from a vast number of sources.

Scale Without Silos: Customer-Facing Analytics on Open Data

Customer-facing analytics is your competitive advantage, but ensuring high performance and scalability often comes at the cost of data governance and increased data silos. The open data lakehouse offers a solution—but how do you power low-latency, high-concurrency queries at scale while maintaining an open architecture?

Data Mesh and Governance at Twilio

At Twilio, our data mesh enables data democratization by allowing domains to share and access data through a central analytics platform without duplicating datasets—and vice versa. Using AWS Glue and Lake Formation, only metadata is shared across AWS accounts, making the implementation efficient with low overhead while ensuring data remains consistent, secure, and always up to date. This approach supports scalable, governed, and seamless data collaboration across the organization.

Open Data using Onehouse Cloud

If you've ever tried to build a data lakehouse, you know it's no small task. You've got to tie together file formats, table formats, storage platforms, catalogs, compute, and more. But what if there was an easy button?
